TWH – An unknown number of Pagans have reported that Facebook has put them in “Facebook jail.” That metaphorical incarceration refers to a temporary suspension of posting ability on Facebook.

Facebook’s standards for violations, and the processes that follow one, seem opaque and confusing. As an example, The Wild Hunt reported last year on an exhibition at Raymond Buckland’s Museum of Witchcraft and Magick featuring photographs by William Mortensen. Facebook algorithms immediately flagged the photographs for violating its community standards. TWH requested a review of the image, and the representative permitted its publication. Manny Tejeda-Moreno, Editor-in-Chief of The Wild Hunt, said, “We received no specifics to how the decision was made or what was offensive about the image. We surmised it was the shocking reality that nipples exist and a misogynist algorithm affirming only certain people are allowed to show them.”

“The preparation for the Sabbath has been a favorite and frequent theme of the artists who have dealt with this material. The young witch, eager and exuberant, is being rubbed by the old witch with a magic ointment. By the virtue of this salve, according to tradition, the witches were enabled to fly to their assemblies.” – William Mortensen 1935. Image courtesy of Stephen Romano Gallery

The issue has affected other communities as well. Several years ago, The Guardian reported on Facebook censorship of images from Indigenous communities. The bafflement extends both to those accused of violating Facebook’s Community Standards and to those who report others for doing so.

The Wild Hunt has explored the Facebook violation process. This is the first part of a two-part report.

What is Facebook “Jail”?

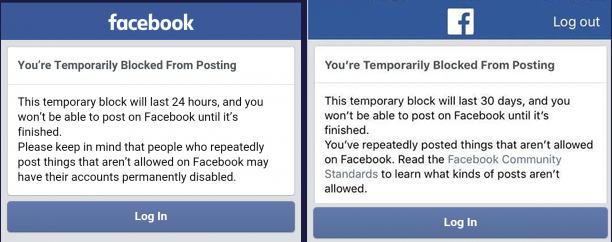

For minor violations of Facebook’s Community Standards, Facebook “jail” lasts for 24 hours, but it can extend longer. Users receive some version of the following messages depending on the length of their “sentence.”

While in Facebook “jail,” the user can only view posts. They cannot interact with anyone else on Facebook. If a Facebook user has repeated serious violations on their “record,” Facebook may suspend their account. Facebook users in “jail” can appeal to Facebook.

The Community Standards of Facebook

Facebook has developed a complex set of “Community Standards.” Posts anywhere in the world must meet these standards, regardless of local cultural norms or even differing definitions of what falls within each of the domains listed below.

Facebook’s standards consist of six domains:

1. Violence and Criminal Behavior

2. Safety

3. Objectionable Content

4. Integrity and Authenticity

5. Respecting Intellectual Property

6. Content Related Requests

Facebook has further divided these six domains into 26 sub-domains. Some of these Standards are designed to disrupt online recruiting by hate groups and terrorists. Others strive to constrain posts that promote or support mass murder, self-harm, or exploitation.

How some algorithms work

The algorithms are complicated, and they differ from one category of violation to another. For the sake of explanation, we will look at the algorithms related to hate speech.

Facebook defines an algorithm as “a formula or a set of steps to solve a particular problem.” Facebook has publicly acknowledged two algorithms that filter out hate speech before it becomes visible to users. We do not know how many algorithms are actually in use, but we do know that these two exist.

Facebook has developed a database of identified hate speech. One algorithm compares “strings” (a specific pattern of words) from a pending post to this database of identified hate speech. If those word strings exactly match word strings in the database, Facebook will block that pending post from becoming visible.
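For readers who want to see the idea in concrete terms, the exact-match step described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: Facebook has not published its implementation, and the database contents, the function names `normalize` and `is_blocked`, and the normalization step (lowercasing and collapsing whitespace) are all hypothetical.

```python
import re

# Hypothetical stand-in for the database of identified hate speech.
# Placeholder phrases are used here; the real database is not public.
BLOCKED_STRINGS = {"placeholder banned phrase", "another banned phrase"}


def normalize(text: str) -> str:
    """Lowercase the text and collapse runs of whitespace (an assumption)."""
    return re.sub(r"\s+", " ", text.lower()).strip()


def is_blocked(post: str, blocked=BLOCKED_STRINGS) -> bool:
    """Return True if any database string appears verbatim in the post."""
    normalized = normalize(post)
    return any(phrase in normalized for phrase in blocked)


# A pending post is checked before it becomes visible.
print(is_blocked("This has ANOTHER   banned phrase in it"))
print(is_blocked("A post about a museum exhibition"))
```

By construction, a filter of this kind can only catch strings that are already in the database.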

The second algorithm assigns a score to each pending post. Scores are classified into three ranges. If a pending post would score in the high range, Facebook would block it from becoming visible. If it would score in the middle range, Facebook would send it to a content review team. If it would score in the low range, Facebook would allow it to become visible.
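The three-range routing described above can be sketched as follows. How Facebook computes the score and where it draws the lines between ranges are not public, so the 0.0 to 1.0 scale, the two threshold values, and the names `Decision` and `route_post` are assumptions made purely for illustration.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"          # low range: the post becomes visible
    HUMAN_REVIEW = "review"  # middle range: sent to a content review team
    BLOCK = "block"          # high range: the post is blocked


# Hypothetical cut-offs on a 0.0-1.0 scale; the real ranges are not public.
REVIEW_THRESHOLD = 0.4
BLOCK_THRESHOLD = 0.8


def route_post(score: float) -> Decision:
    """Map a pending post's score to one of the three outcomes."""
    if score >= BLOCK_THRESHOLD:
        return Decision.BLOCK
    if score >= REVIEW_THRESHOLD:
        return Decision.HUMAN_REVIEW
    return Decision.ALLOW


for score in (0.1, 0.5, 0.9):
    print(score, route_post(score).value)
```

In a scheme like this, where the two thresholds sit determines how many posts are blocked outright and how many go to human reviewers.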

According to Facebook, when it finds a violation, it notifies the user who created the post. That user can request a review. If they do, the Content Review Team will review the pending post, typically within 24 hours. If that review finds that no violation occurred, Facebook will notify the user and allow the blocked post to become visible.

Content review teams

Human teams make the final judgment. According to The Drum, Facebook had 15,000 human content reviewers in 20 centers around the world, as of June 2019. These 15,000 reviewers examine 2 million posts each day. In the period from October 2018 to June 2019, they removed 7.3 million hate speech posts.

The strain on the individuals who manually review flagged content may also matter. The Verge reported on the emotional impact that enforcing Facebook’s standards has had on the Phoenix Content Review Team. Facebook did not employ these workers; they were “temps.” As The Verge reports, “It is an environment where workers cope by telling dark jokes about committing suicide, then smoke weed during breaks to numb their emotions. It’s a place where employees can be fired for making just a few errors a week — and where those who remain live in fear of the former colleagues who return seeking vengeance.” It is not clear how this environment and the reviewers’ temporary status influence their judgments about the algorithm’s decisions.

Over the last few years, public scrutiny of Facebook and other social media has increased because of growing knowledge about social media’s impact on marketing and political processes as well as data collection and breaches, perhaps most notably from the Cambridge Analytica case.

In part 2 of our exploration of how Facebook and other social media enforce community standards, we’ll look at how those standards might specifically impact Pagans and our spiritual and religious expressions.
